Introduction

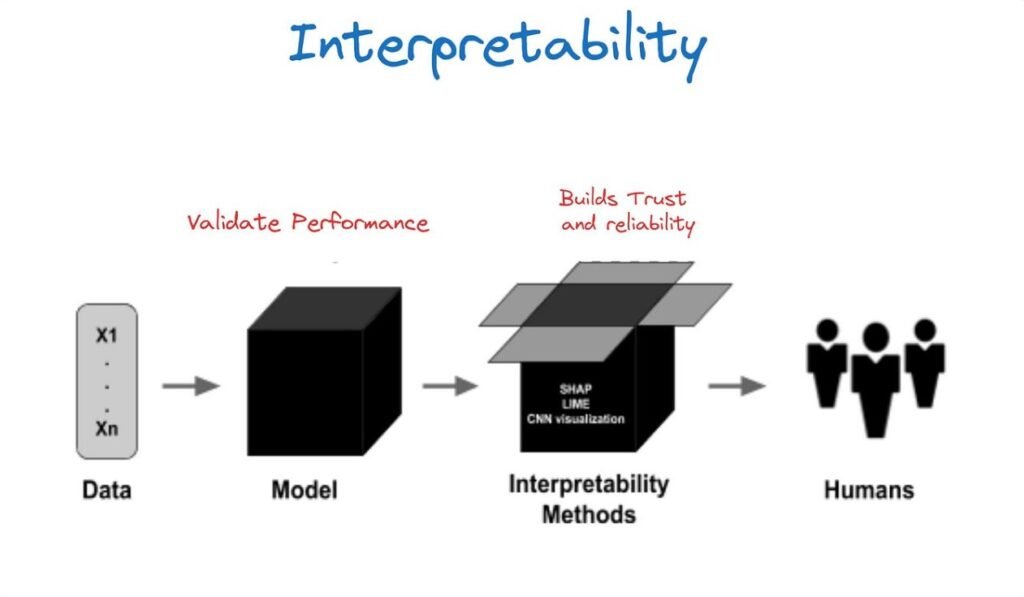

Machine learning models are often evaluated by accuracy, precision, recall, or error metrics. However, strong performance alone is not always enough. In many real-world settings—finance, healthcare, HR, insurance, and even marketing—teams must also understand why a model produced a particular prediction. This need is addressed by model interpretability: a set of methods that make model decisions more transparent and easier to justify.

Interpretability matters for trust, debugging, compliance, and responsible decision-making. If a model rejects a loan application, flags a transaction as fraud, or predicts patient risk, stakeholders want an explanation that is consistent and understandable. This is why learners in a data science course are increasingly introduced to interpretability tools alongside modelling and evaluation techniques.

Why Interpretability Is Essential in Practical ML

Interpretability supports several critical goals:

- Building trust with stakeholders: Business leaders and users are more likely to adopt model-driven decisions when they can see reasonable explanations.

- Debugging and improving models: Explanations can reveal data leakage, spurious correlations, or overreliance on a single feature.

- Meeting regulatory expectations: In some domains, organisations must justify automated decisions and demonstrate that models are fair and non-discriminatory.

- Reducing risk in deployment: Models that behave unpredictably are hard to monitor. Interpretability helps establish sanity checks and early warnings.

For example, if a churn model places high importance on “time since last purchase,” that is plausible. But if it heavily weights a field like “customer ID” or “postal code” without business justification, that signals a problem: data leakage, bias (a postal code can act as a proxy for protected attributes), or an artefact of feature engineering. A structured data scientist course in Pune typically emphasises this type of practical reasoning because interpretability is most useful when tied to real operational decisions.

Local vs Global Explanations: Two Complementary Views

Interpretability methods generally fall into two categories:

Global interpretability

This explains overall model behaviour across the dataset. It answers questions like:

- Which features influence predictions most often?

- How does the model respond, on average, as a feature value increases or decreases?

- Are there interactions between features that drive outcomes?

Global explanations help with governance, documentation, and model reviews.

Local interpretability

This explains an individual prediction. It answers:

- Why did the model assign this specific user a high churn probability?

- Which features pushed the prediction up or down for this single case?

Local explanations are valuable for case-level decisioning, customer support, and exception handling. SHAP and LIME are widely used because they can provide local explanations for many model types, including complex black-box models.

LIME: Intuitive Local Explanations Through Approximation

LIME (Local Interpretable Model-agnostic Explanations) explains a model’s prediction by building a simple interpretable model around a single data point.

Here’s the core idea:

- Take the instance you want to explain.

- Create many slightly modified “neighbour” samples around it (perturbations).

- Ask the black-box model for predictions on those perturbed samples.

- Fit a simple model (often linear) to those predictions, weighting each sample by its proximity to the original instance, so that it approximates the black-box behaviour near that point.

- Use the simple model’s weights to explain which features influenced the prediction.
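The steps above can be sketched in plain Python. This is a minimal illustration of the idea, not the `lime` package: the `black_box` function, the Gaussian perturbation scale, the exponential proximity kernel, and the instance values are all assumptions chosen for the example.

```python
import math
import random

def black_box(x):
    """Hypothetical opaque model: a nonlinear score over two features."""
    return 1.0 / (1.0 + math.exp(-(3.0 * x[0] - 2.0 * x[1] ** 2)))

def solve(a, b):
    """Solve a small linear system a @ w = b by Gaussian elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        # Partial pivoting: move the largest remaining entry onto the diagonal.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for r in range(n - 1, -1, -1):
        w[r] = (m[r][n] - sum(m[r][c] * w[c] for c in range(r + 1, n))) / m[r][r]
    return w

def lime_explain(model, instance, n_samples=2000, scale=0.3, kernel_width=0.5, seed=0):
    """Fit a proximity-weighted linear surrogate around one instance."""
    rng = random.Random(seed)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        # Create a perturbed neighbour of the instance.
        z = [xi + rng.gauss(0.0, scale) for xi in instance]
        # Ask the black-box model for its prediction on the neighbour.
        targets.append(model(z))
        # Neighbours closer to the instance get a larger weight.
        dist2 = sum((zi - xi) ** 2 for zi, xi in zip(z, instance))
        weights.append(math.exp(-dist2 / kernel_width ** 2))
        # Intercept column plus the offset of each feature from the instance.
        rows.append([1.0] + [zi - xi for zi, xi in zip(z, instance)])
    # Weighted least squares via the normal equations (X^T W X) w = X^T W y.
    k = len(instance) + 1
    xtwx = [[sum(w * r[i] * r[j] for w, r in zip(weights, rows)) for j in range(k)]
            for i in range(k)]
    xtwy = [sum(w * r[i] * y for w, r, y in zip(weights, rows, targets))
            for i in range(k)]
    coef = solve(xtwx, xtwy)
    return coef[0], coef[1:]  # local intercept, per-feature weights

intercept, weights = lime_explain(black_box, [0.5, 1.0])
print("surrogate prediction at the instance:", round(intercept, 3))
print("local feature weights:", [round(w, 3) for w in weights])
```

The sign and size of each weight indicate how the surrogate expects the prediction to move when that feature changes slightly around the chosen instance. Production tools add further steps, such as feature scaling and regularisation, that this sketch leaves out.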

Strengths of LIME

- Works with nearly any model (model-agnostic).

- Produces explanations that are easy to understand, especially for tabular and text tasks.

- Useful when you only need explanations for a small number of critical cases.

Limitations

- Explanations can be unstable if perturbations or sampling are not well controlled.

- The “local neighbourhood” may not represent realistic data points, especially in high-dimensional spaces.

- It provides an approximation, not a guaranteed faithful decomposition of the original model.

In practice, LIME is often used for quick diagnosis and stakeholder-friendly explanations, but teams should validate stability by running it multiple times and checking consistency.
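One way to make that stability check concrete is to repeat the explanation under different random seeds and summarise how much each feature weight moves. The sketch below is only an illustration: `noisy_explainer` is a hypothetical stand-in for a real explainer call, and the feature names and weights are invented for the example.

```python
import random
import statistics

def noisy_explainer(seed):
    """Hypothetical stand-in for one explanation run.

    Returns feature weights that vary with the sampling seed, the way a
    perturbation-based explainer's output can vary between runs.
    """
    rng = random.Random(seed)
    assumed_weights = {"tenure": -0.40, "monthly_charges": 0.25, "support_calls": 0.02}
    return {name: w + rng.gauss(0.0, 0.05) for name, w in assumed_weights.items()}

def stability_report(explain, seeds):
    """Summarise how consistent each feature's weight is across repeated runs."""
    runs = [explain(seed) for seed in seeds]
    report = {}
    for name in runs[0]:
        values = [run[name] for run in runs]
        mean = statistics.mean(values)
        report[name] = {
            "mean": mean,
            "stdev": statistics.stdev(values),
            # Share of runs whose sign matches the sign of the average weight.
            "sign_agreement": sum((v > 0) == (mean > 0) for v in values) / len(values),
        }
    return report

report = stability_report(noisy_explainer, seeds=range(20))
for name, stats in report.items():
    print(name, {key: round(value, 3) for key, value in stats.items()})
```

A feature whose weight changes sign between runs, or whose spread is large relative to its mean, should not be presented to stakeholders as a reliable driver of the prediction.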

SHAP: Consistent Feature Contributions Using Shapley Values

SHAP (SHapley Additive exPlanations) is based on Shapley values from cooperative game theory. The concept treats each feature as a “player” contributing to a prediction. SHAP aims to fairly distribute the difference between the model’s baseline output and the final prediction across all features.

Key characteristics:

- SHAP values quantify how much each feature pushed the prediction higher or lower relative to a baseline, typically the model’s average output over a reference dataset.

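- The explanations are additive: baseline + sum of feature contributions = the model’s output for that instance. The output may be on a transformed scale (for example, log-odds rather than probability for some classifiers).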

- It supports both local and global interpretability by aggregating SHAP values across many predictions.
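To make these properties concrete, the sketch below computes exact Shapley values for a tiny model by enumerating every feature coalition. It is an illustration under stated assumptions, not the `shap` library's algorithm: the `model` function is hypothetical, and "missing" features are replaced by a single fixed baseline row rather than averaged over a background dataset.

```python
from itertools import combinations
from math import factorial

def model(x):
    """Hypothetical scoring model with an interaction between features 1 and 2."""
    return 10.0 + 2.0 * x[0] + 3.0 * x[1] * x[2]

def shapley_values(predict, instance, baseline):
    """Exact Shapley values by enumerating all coalitions (feasible only for few features)."""
    n = len(instance)

    def value(coalition):
        # Features in the coalition take the instance's value; the rest stay at baseline.
        mixed = [instance[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(mixed)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for a coalition of this size that excludes feature i.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi

instance = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]

# Local explanation: contributions for one prediction.
phi = shapley_values(model, instance, baseline)
base_value = model(baseline)
print("contributions:", phi)
print("baseline + contributions:", base_value + sum(phi))
print("model prediction:", model(instance))

# Global view: mean absolute contribution across several rows.
rows = [[1.0, 2.0, 3.0], [2.0, 0.0, 1.0], [0.0, 1.0, 1.0]]
all_phi = [shapley_values(model, row, baseline) for row in rows]
global_importance = [sum(abs(p[i]) for p in all_phi) / len(rows) for i in range(3)]
print("mean |contribution| per feature:", global_importance)
```

For the first row, feature 0 receives its own additive effect and the interaction term is split equally between features 1 and 2, so the baseline plus the contributions matches the prediction up to floating-point rounding. Averaging absolute contributions across rows is one common way to turn local values into a global ranking.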

Strengths of SHAP

- Strong theoretical grounding with useful consistency properties.

- Produces both local explanations (single prediction) and global insights (feature importance distributions).

- Many efficient implementations exist for tree-based models (e.g., gradient boosting), making it practical at scale.

Limitations

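- Can be computationally expensive: exact Shapley values require evaluating the model over a number of feature subsets that grows exponentially with the feature count, so model-agnostic explainers rely on sampling approximations.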

- Interpretation requires care when features are highly correlated; contributions may be shared in ways that confuse stakeholders.

- SHAP explains the model’s behaviour, not necessarily the true causal relationships in the world.

This last point is vital: interpretability methods describe what the model learned, which can include bias. That is why interpretability should be paired with fairness checks and careful feature selection—skills often covered in a data science course focused on real deployment readiness.
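The correlated-features caveat listed above can be seen in a toy case. Suppose two features always carry the same value in the data, so the two hypothetical models below make identical predictions on every real row. The closed-form two-feature Shapley calculation and the zero baseline are assumptions made for this sketch.

```python
def shapley_two_features(predict, x, base):
    """Exact Shapley values for a two-feature model against a single baseline row."""
    f00 = predict([base[0], base[1]])  # neither feature revealed
    f10 = predict([x[0], base[1]])     # only feature 0 revealed
    f01 = predict([base[0], x[1]])     # only feature 1 revealed
    f11 = predict([x[0], x[1]])        # both features revealed
    # Average each feature's marginal effect over both orderings.
    phi0 = 0.5 * ((f10 - f00) + (f11 - f01))
    phi1 = 0.5 * ((f01 - f00) + (f11 - f10))
    return [phi0, phi1]

def model_a(x):
    """Hypothetical model that relies on feature 0 only."""
    return 4.0 * x[0]

def model_b(x):
    """Hypothetical model that spreads the same signal over both features."""
    return 2.0 * x[0] + 2.0 * x[1]

x = [1.0, 1.0]     # the two features are duplicates of each other in the data
base = [0.0, 0.0]

phi_a = shapley_two_features(model_a, x, base)
phi_b = shapley_two_features(model_b, x, base)
print("model_a contributions:", phi_a)  # [4.0, 0.0]
print("model_b contributions:", phi_b)  # [2.0, 2.0]
```

Both models predict 4.0 for this row, yet the credit is assigned very differently. The attribution reflects how each model happens to use the duplicated inputs, not which underlying factor matters in the real world.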

Practical Guidance for Using SHAP and LIME Effectively

To get reliable value from interpretability, consider these practices:

- Start with a clear question: Are you diagnosing model issues, explaining to users, or meeting governance needs?

- Use global and local together: Global insights guide model improvements; local insights support case-level decisions.

- Validate explanation stability: Re-run LIME explanations, compare across similar cases, and ensure outputs are consistent.

- Treat correlated features carefully: Consider grouping features, testing alternative encodings, or using domain knowledge to interpret results.

- Document explanation outputs: Store explanation summaries with model versions to support auditability and monitoring.

These habits are especially useful for professionals applying interpretability in business settings—one reason many learners seek structured training such as a data scientist course in Pune to connect theory with real use-cases.

Conclusion

Model interpretability helps teams understand and justify machine learning predictions, especially when models are complex or used in high-impact decisions. LIME provides approachable local explanations by approximating model behaviour near a specific instance, while SHAP offers a more consistent, theory-backed approach to attributing feature contributions. Used thoughtfully, these methods improve trust, debugging, governance, and responsible deployment.

For practitioners building practical ML skills through a data science course, interpretability is no longer optional—it is part of building models that are not only accurate, but also usable and accountable in the real world.
